New risk class – autonomous agents acting in your name create a broad new category of risk that traditional AI governance, designed for static outputs under human review, does not address. Anthropic has made the same point.

Real, unfamiliar damage – agentic risks are often hard to see and can cause harm that even some providers did not anticipate. New examples reach the news every week.

Why now – technology platforms are making agents easy to access, and firms face pressure from staff and investors to move fast. Yet adopting agentic AI before you can control it creates risk, not advantage.

Why firms need outside assistance – a competitive advantage built on agentic AI is only durable if you can govern it safely. Whether or not laws apply, foreseeable and preventable risks cause financial, reputational, and strategic damage. Redesigning governance for AI agents is a specialist task, and it is what we do.

We have decades of experience in the international investment industry and access to its beating heart through our active membership of the Investment Association. That is why we tailor our agentic AI risk consulting services to mid-sized asset managers aiming to improve productivity through agentic AI.

Many are preparing to deploy agents and want to assess their readiness, be clear about what they are aiming to achieve, and have a safe path to success. Others have already deployed agents and want peace of mind that they have the risks under control, evidence of their oversight and, if necessary, support for any remediation.

Our associations with the Institute of Risk Management and other industry bodies also give us the knowledge and connections to serve other sectors.

Regulation is an important influence, so we focus on our home region, EMEA. It includes both regulated jurisdictions, where AI laws require formal risk management, and less regulated countries, where firms rely on standards but the risks and harms remain, making governance more important, not less.

We help firms overcome this in two moves:

Here is our 5-step process for kick-starting your agentic transformation:

Traditional AI governance was designed for static models that produce outputs for humans to review before any action is taken. AI agents operate differently: they act autonomously across systems, accumulate memory across sessions, and can trigger real-world consequences. This changes how risk emerges, how controls fail, and where accountability must sit – none of which existing frameworks were built to handle.

Regulatory compliance sets a legal minimum. Agentic AI governance goes further: it identifies and manages foreseeable, preventable risks that regulations do not yet fully address, produces defensible evidence of oversight for boards and auditors, and ensures that autonomous agent behaviour stays aligned with your firm’s risk appetite. Compliance tells you what you must not do; governance tells you how to operate safely within what is permitted.

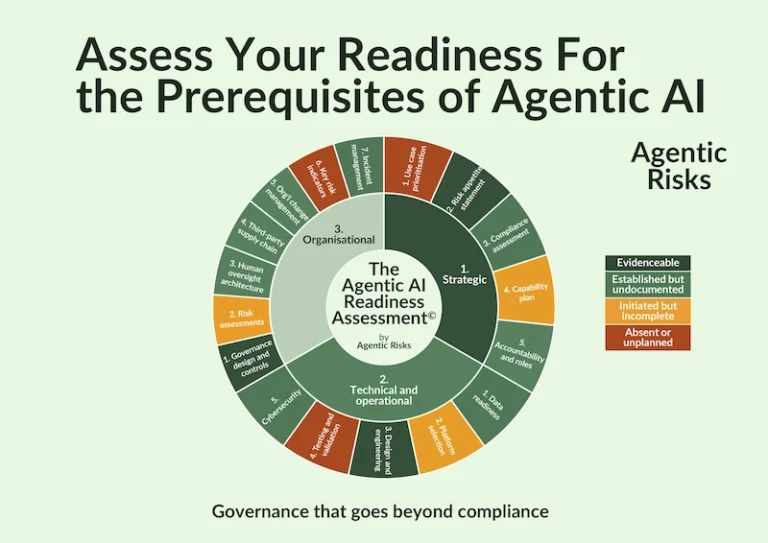

Readiness assessment covers three domains: strategic (clarity on use cases, risk appetite, and adoption strategy), technical and operational (infrastructure, data quality, integration capability, and monitoring), and organisational (accountability structures, training, and change management). Firms whose adoption strategies succeed are those whose roadmaps are grounded in an honest view of their current state across all three.

A risk-based approach means prioritising use cases by the level of risk they carry, applying governance prerequisites proportionately (so lower-risk workflows require fewer controls), and building a roadmap from your actual readiness state rather than an idealised one. It sits between two extremes, permissive access (deploy first, monitor later) and full pre-approval (require every control before any deployment), and enables progress without sacrificing oversight.

Boards and regulators increasingly expect firms to demonstrate that they have identified the material risks created by autonomous agents, that proportionate controls are in place and tested, that human oversight mechanisms are documented, and that there is a clear accountability structure for AI-related decisions. In regulated jurisdictions such as the EU, this expectation is being formalised through AI-specific legislation; in less regulated markets across EMEA, the evidential standard is set by best practice and fiduciary duty.

Platform-native governance tools are designed to support deployment, not independent oversight. They cannot provide the objective verification of control effectiveness that boards, auditors, and regulators require – and they create dependency on a vendor whose commercial interests may not align with yours. Independent governance, built on a framework not tied to any platform, gives firms the ability to assess, challenge, and evidence their agentic AI risk management without those conflicts.

